This screenshot from Uber’s driver training video shows the course on which humans practice their role in automated vehicles before heading onto public streets.

A fatal crash with an autonomous Uber has raised questions about how humans should act when robots are in control.

A typical Uber driver has clearly defined responsibilities: arrive on time, know your route, keep your car clean, and, most importantly, safely deliver your passenger to their destination. Sitting behind the wheel of a self-driving Uber, or any autonomous vehicle for that matter, is, paradoxically, more complicated. A recent, tragic incident in which a self-driving Uber struck and killed a 49-year-old pedestrian while a safety driver sat behind the wheel has stirred up many conversations about blame, regulations, and the overall readiness of autonomous tech. The lingering question, however, is how we humans fit into this picture.

The Tempe Police Department released a 14-second clip of the moments leading up to the fatal Uber crash. It shows an outside video, which includes the victim, as well as an inside view of the cabin, which shows the reaction—or lack of reaction—by the person in the driver’s seat.

What does it mean to be a safety driver?

Both Uber and Lyft (the latter of which hadn’t responded to a request for comment at the time of publication) have dedicated training programs to teach flesh-and-blood people how to act when behind the wheel of a car that drives itself. According to a schedule Uber provided to PopSci.com, the training program includes both theoretical and practical evaluations.

By our reading of these materials, it appears that Uber expects that a driver may sometimes need to take control of the vehicle, but the specific circumstances in which that’s the case are somewhat unclear. While Lyft is more tight-lipped about its onboarding process for new drivers, the company does provide a little more insight about when the human is meant to take over command.

In Lyft’s FAQ about its self-driving program, it states that the “pilots” are “constantly monitoring the vehicle systems and surrounding environment, and are ready to manually take control of the vehicle if an unexpected situation arises.” It goes on to specifically mention complicated traffic patterns, like detours, or humans directing traffic around things like construction.

While Uber’s guidelines may differ from Lyft’s, the concept of constantly monitoring the car’s surroundings has been a keystone in discussions about Uber’s fatal crash. The video appears to show the car’s driver looking down into the cabin rather than out in the direction the car is traveling.

“On two separate occasions, the driver seems to be looking down at something for nearly five seconds,” says Bryant Walker Smith, a leading legal expert in the arena of autonomous vehicle deployment. “At 37 miles per hour, a car covers about 250 feet in 5 seconds.” Average reaction time for a human driver is in the neighborhood of 2.3 seconds, which suggests a driver offering their full attention to the road may have been able to at least attempt to brake or perform an evasive maneuver.
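As a rough check on those figures, here is a minimal sketch of the distance arithmetic. It assumes the car holds a constant 37 miles per hour and ignores braking entirely; the function name `feet_traveled` is ours, chosen only for illustration.

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def feet_traveled(speed_mph: float, seconds: float) -> float:
    """Distance in feet covered at a constant speed over the given time."""
    feet_per_second = speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR
    return feet_per_second * seconds


# Assumption: constant speed, no deceleration.
glance = feet_traveled(37, 5)      # eyes off the road for five seconds
reaction = feet_traveled(37, 2.3)  # the reaction time cited in the article

print(f"{glance:.0f} ft during a 5-second glance")       # 271 ft
print(f"{reaction:.0f} ft during a 2.3-second reaction")  # 125 ft
```

Under that constant-speed assumption, a five-second glance works out to roughly 270 feet, in the same range as the figure Smith quotes, while the car travels roughly 125 feet during a 2.3-second reaction.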

Uber Self-Driving Course

Uber

Hands at 10 and 2

If you watch the Uber video regarding its self-driving pilot training, you can see the person keeps their hands close to the wheel during the trip, a detail the New York Times also included in its reporting about the story. That suggests an active role in the driving process, even though the car is meant to make all of the decisions.

But the idea of a driver maintaining attention when not actively piloting the vehicle has been a sticking point since cars first started hinting at self-driving tech. Back in 2016, a Tesla got into a fatal collision when its autonomous systems couldn’t differentiate the white panels on the side of a truck from the brightness of the open sky. In that case, however, the driver reportedly didn’t notice, or chose to ignore, the vehicle’s calls for human intervention.

Researchers, including those at the Stanford Center for Automotive Research, have been studying this moment of hand-off between autonomous systems and flesh-and-blood drivers for years, exploring options like haptic feedback in the steering wheel, as well as lights in and around the dashboard to indicate that something is wrong and intervention is required. Of course, all of that is irrelevant if the car doesn’t see trouble coming in the first place.

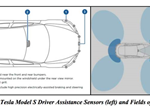

The navigation and self-driving tech in Uber’s vehicles is also a lot more advanced than Tesla’s semi-autonomous Autopilot mode, which isn’t meant to completely replace the need for a driver and is still in beta, according to the company. Uber uses LIDAR, a system that creates a 3D map of the areas surrounding the car using lasers, as well as a typical radar system and cameras to detect objects before collisions.

On paper, these systems should have been able to detect a pedestrian in the road and send a signal to the driver to take over. While Uber hasn’t confirmed the exact way in which its system reacted just before and during the crash, it doesn’t appear that the driver received a warning—at least not with enough time to intervene.

Uber crash screenshot

The video of the Uber incident from March 18 (which is not linked in this article, but is readily available online if you want to see it) shows the driver looking down into the cabin in the moments before the crash.

Uber

Missed signals

The National Highway Traffic Safety Administration is currently investigating the Uber crash, and has laid out a set of recommended, but ultimately voluntary, guidelines for states testing autonomous vehicles. In the section about Human Machine Interface, it lays out guidelines for human drivers, most of which involve interpreting signals from the car itself.

It does, however, suggest that truly driverless cars still need monitoring from the “dispatcher or central authority,” which would track the status and condition of the car in real time. In essence, that central authority plays a similar role to a person sitting in the front seat. But a remote operator presumably wouldn’t have a camera pointed at them at the time of an incident, which adds another layer of complexity to the already-muddled topic of blame.

Even when these systems work correctly, handing over controls to a human doesn’t always avoid accidents. In fact, Google found that all of the accidents it experienced in the early days of testing its Waymo self-driving cars came as a result of human intervention.

Cruise AV

GM’s 2019 autonomous rides won’t have a steering wheel or pedals.

GM

Driver not found

One fact that further complicates the current conversation about blame is that Arizona’s relaxed guidelines regarding self-driving cars don’t require a human pilot of any kind. Google-owned Waymo is currently operating a self-driving taxi fleet in Arizona with no drivers at all. And GM recently confirmed that it would continue with its plans to test a fleet of its self-driving Cruise AV cars, which don’t have a steering wheel or pedals, precluding humans from giving any input.

Uber has temporarily suspended its autonomous taxi testing during the investigation of last week’s incident, but there’s no indication that the event will affect how these vehicles are governed.

California has allowed self-driving car testing since 2014, but until now, the state has required a driver behind the wheel. A representative from the California DMV provided the following guideline for companies testing on public roads: “A driver must be seated in the driver’s seat, monitoring the safe operation of the autonomous vehicle and capable of taking over immediate manual control of the autonomous vehicle in the event of an autonomous technology failure or emergency.”

On April 2, however, the California DMV will begin issuing permit applications for driverless deployment and testing. According to a representative, it hasn’t received any applications for that kind of permit yet. The state does not have any intention of changing its outlook on these vehicles in light of the Uber incident.

Giving the people what they want

While truly driverless cars are clearly the endgame of this technology, the companies testing them apparently don’t think passengers are quite ready to step into an empty car and zoom off to their location.

One key responsibility of a Lyft pilot in an autonomous vehicle is to explain some of the technology and the process to passengers as they get in. The drivers aren’t permitted to talk during the ride, but their pre-departure spiel is partially designed to get riders over the mental hump of letting the machine take charge.

A 2016 Cox Automotive survey found that roughly 47 percent of respondents feared the computer system in an automated vehicle could fail. In that survey, 27 percent said they wouldn’t be able to fully relax with the AV totally in control.

The fallout

While many experts consider this first human casualty of true self-driving tech to be inevitable, it’s unclear how things will play out when it comes to both rider sentiment and possible regulation. “The mood in some states (especially in the Northeast) and even in Congress could shift somewhat toward a larger supervisory role for governments,” says Cook. “Some developers may support that, either because they need credibility or they want to protect their credibility from less reputable would-be developers.”

According to Tempe Police, there are currently no criminal charges on file against Uber or the driver who was in the car during the fatal crash. The family of the victim, however, has mentioned the possibility of civil action, though they haven’t specified exactly who will be the target of such a lawsuit.

This nebulous web of rules, regulations, and responsibilities makes sitting behind the wheel of a self-driving car more complicated than piloting the vehicle yourself, at least for now.
